People sometimes have trouble making small sacrifices now that will reward them handsomely later. How often do we ignore the advice to make a few diet and exercise changes to live a longer, healthier life? Or to put some money aside to grow into a nest egg? Intellectually, we get it — but instant gratification is a powerful force. You don’t have to be one of those self-defeating rubes. Start buying LED light bulbs. You’ve probably seen LED flashlights, the LED “flash” on phone cameras and LED indicator lights on electronics. But LED bulbs, for use in the lamps and light sockets of your home, have been slow to arrive, mainly because of their high price: their electronics and heat-management features have made them much, much more expensive than other kinds of bulbs. That’s a pity, because LED bulbs are a gigantic improvement over incandescent bulbs and even the compact fluorescents, or CFLs, that the world spent several years telling us to buy. LEDs last about 25 times as long as incandescents and three times as long as CFLs; we’re talking maybe 25,000 hours of light. Install one today, and you may not own your house, or even live, long enough to see it burn out. (Actually, LED bulbs generally don’t burn out at all; they just get dimmer.) You know how hot incandescent bulbs become. That’s because they convert only 5 to 10 percent of your electricity into light; they waste the rest as heat. LED bulbs are far more efficient. They convert 60 percent of their electricity into light, so they consume far less electricity. You pay less, you pollute less. But wait, there’s more: LED bulbs also turn on to full brightness instantly. They’re dimmable. The light color is wonderful; you can choose whiter or warmer bulbs. They’re rugged, too. It’s hard to break an LED bulb, but if the worst should come to pass, a special coating prevents flying shards. Yet despite all of these advantages, few people install LED lights. They never get farther than: “$30 for a light bulb? 
That’s nuts!” Never mind that they will save about $200 in replacement bulbs and electricity over 25 years. (More, if your electric company offers LED-lighting rebates.) Surely there’s some price, though, where that math isn’t so off-putting. What if each bulb were only $15? Or $10? Well, guess what? We’re there. LED bulbs now cost less than $10. Nor is that the only recent LED breakthrough. The light from an LED bulb doesn’t have to be white. Several companies make bulbs that can be any color you want. I tried out a whole Times Square’s worth of LED bulbs and kits from six manufacturers. May these capsule reviews shed some light on the latest in home illumination. 3M ADVANCED LED BULBS On most LED bulbs, heat-dissipating fins adorn the stem. (The glass of an LED bulb never gets hot, but the circuitry does. And the cooler the bulb, the better its efficiency.) As a result, light shines out only from the top of the bulb. But the 3M bulbs’ fins are low enough that you get lovely, omnidirectional light. These are weird-looking, though, with a strange reflective material in the glass and odd slots on top. You won’t care about aesthetics if the bulb is hidden in a lamp, but $25 each is unnecessarily expensive; read on. CREE LED BULBS Cree’s new home LED bulbs, available at Home Depot, start at $10 apiece, or $57 for a six-pack. That’s about as cheap as they come. The $10 bulb provides light equivalent to that from a 40-watt incandescent. Cree’s 60-watt equivalent is $14 for “daylight” light, $13 for warmer light. The great thing about these bulbs is that they look almost exactly like incandescent bulbs. Cree says that its bulbs are extraordinarily efficient; its “60-watt” daylight bulb consumes only nine watts of juice (compared with 13 watts on the 3M, for example). As a result, this bulb runs cooler, so its heat sink can be much smaller and nicer looking. 
TORCHSTAR These color-changeable light bulbs (available on Amazon) range from $10 for a tracklight-style spotlight to $23 for a more omnidirectional bulb. Each comes with a flat, plastic remote control that can be used to dim the lights, turn them on and off, or change their color (the remote has 15 color buttons). You can also make them pulse, flash or strobe, which is totally annoying. The TorchStars never get totally white — only a feeble blue — and they’re not very bright. But you get the point: LED bulbs can do more than just turn on and be white. PHILIPS HUE For $200, you get a box with three flat-top bulbs and a round plastic transmitter, which plugs into your network router. At that point, you can control both the brightness and colors of these lights using an iPhone or Android phone app, either in your home or from across the Internet, manually or on a schedule. It offers icons for predefined combinations like Sunset (all three bulbs are orange) and Deep Sea (each bulb is a different underwaterish color). You can also create your own color schemes — by choosing a photo whose tones you want reproduced. You can dim any bulb, or turn them all off at once from your phone. (Additional bulbs, up to 500, are $60 each.) Philips gets credit for doing something fresh with LED technology; the white color is pure and bright; and it’s a blast to show them off for visitors. Still, alas, the novelty wears off fairly quickly. INSTEON This kit ($130 for the transmitter, $30 for each 60-watt-equivalent bulb) is a lot like Philips’s, except that there’s no color-changing; you just use the phone app to control the white lights, individually or en masse. Impressively, each bulb consumes only 8 watts. You can expand the system up to 1,000 bulbs, if you’re insane. Unfortunately, the prerelease version I tested was a disaster. Setup was a headache. You had to sign up for an account. The instructions referred to buttons that didn’t exist. 
You had to “pair” each bulb with the transmitter individually. Once paired, the bulbs frequently fell off the network entirely. Bleah. GREENWAVE SOLUTION This control-your-LED-lights kit doesn’t change colors, but you get four bulbs, not three, in the $200 kit. You get both a network transmitter and a remote control that requires neither network nor smartphone. Up to 500 bulbs (a reasonable $20 each) can respond. Setting up remote control over the Internet is easy. The app is elegant and powerful. It has presets like Home, Away and Night, which turns off all lights in the house with one tap. You can also program your own schedules, light-bulb groups and dimming levels. Unfortunately, these are only “40-watt” bulbs. Worse, each has a weird cap on its dome; in other words, light comes out only in a band around the equator of each bulb. They’re not omnidirectional. The bottom line: Choose the Cree bulbs for their superior design and low price, Philips Hue to startle houseguests, or the GreenWave system for remote control of all the lights in your house. By setting new brightness-per-watt standards that the 135-year-old incandescent technology can’t meet, the federal government has already effectively banned incandescent bulbs. And good riddance to CFL bulbs, with those ridiculous curlicue tubes and dangerous chemicals inside. LED bulbs last decades, save electricity, don’t shatter, don’t burn you, save hundreds of dollars, and now offer plummeting prices and blossoming features. What’s not to like? You’d have to be a pretty dim bulb not to realize that LED light is the future.
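The savings argument in this column comes down to simple arithmetic, which can be sketched in a few lines of Python. The 25,000-hour lifetime, the 25-to-1 lifetime ratio, the 9-watt draw and the $14 price of Cree's "60-watt" daylight bulb come from the column; the electricity price of $0.12 per kWh and the $1 incandescent bulb are assumptions chosen only for illustration, so the result is a rough estimate rather than a reproduction of the $200 figure quoted above.

```python
import math


def lifetime_cost(bulb_price, bulb_life_hours, watts, hours, price_per_kwh):
    """Cost of lighting one socket for `hours` hours: bulbs bought plus electricity."""
    bulbs_needed = math.ceil(hours / bulb_life_hours)
    energy_kwh = watts * hours / 1000
    return bulbs_needed * bulb_price + energy_kwh * price_per_kwh


HOURS = 25_000        # LED lifetime quoted in the column
PRICE_PER_KWH = 0.12  # ASSUMPTION: dollars per kWh, not from the column

# Incandescent: 60 W, 1,000-hour life (25,000 / 25); the $1 price is an ASSUMPTION.
incandescent = lifetime_cost(1.00, 1_000, 60, HOURS, PRICE_PER_KWH)
# LED: Cree's 9 W "60-watt" daylight bulb at the $14 price quoted in the column.
led = lifetime_cost(14.00, 25_000, 9, HOURS, PRICE_PER_KWH)

print(f"Incandescent: ${incandescent:.2f}")
print(f"LED:          ${led:.2f}")
print(f"Savings:      ${incandescent - led:.2f}")
```

Under these assumptions the incandescent socket costs about $205 over 25,000 hours and the LED about $41, most of the difference being electricity. A higher local electricity rate or a rebate would widen the gap.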

Pesticides are used to kill the crop invaders. These pesticides are sprayed on the crops, where they remain. These crops are sold to the public and used in the production of animal feed and other byproducts. The crops are the same ones we buy at market to eat. The bugs are gone but the chemicals are not. We are ingesting these chemicals, which were used to kill living organisms. These chemicals reach the colon and remain there, making the colon toxic and slowly poisoning the body. The Food and Agriculture Organization and the World Health Organization created the Codex Alimentarius Commission (CAC). Its purpose was to create international guidelines for food safety. Despite these guidelines and their stated focus on protecting consumers, the Codex Commission approved seven of the most toxic chemical compounds known to man for use as pesticides. Further, the commission seems to be unconcerned about the pervasive use of these chemicals in animal feed and byproducts. The seven dangerous chemicals approved by the Codex Commission are often referred to as Persistent Organic Pollutants (POPs). They are "persistent" because they aren't expelled easily, or at all without help. Follow the trail: the chemicals are sprayed on crops as pesticides. These crops are used in the preparation of feed and of produce that is marketed to humans. Animals eat the byproducts and humans eat the produce, and both retain the chemicals in their bodies. Then the humans eat the animals and get dosed again with the chemicals. Humans have all these toxins in their bodies and are slowly being poisoned. And it's not just land creatures. Organochlorine, one commonly used POP, runs off from the land into bodies of water, and may be responsible for contaminating the world's seafood supply. Organochlorine collects in fatty tissue, so fish we heretofore ate for their essential fatty acids are becoming unsafe to eat in regular quantities. Washing and peeling don't clear it away completely. Washing doesn't get everything off.
Still, you need to wash all fresh fruit and vegetables to clean them as much as possible. Peeling doesn't get everything off because the chemicals can work their way into the flesh of the produce. The other problem with peeling is that many of the nutrients that we want from the fruit are stored in the skin, so peeling reduces the benefit to your body.

How to Eliminate Toxins from Pesticides

- Avoid crop items containing the highest levels of pesticide residues, like strawberries, peaches and celery. Use only the organically grown ones.

- Grow your own food organically to protect your family from commercial pesticides.

- Avoid chemical-based pesticides. Visit OrganicPesticides.com for natural alternatives.

- Cleanse your intestinal tract 2 to 3 times weekly to prevent the accumulation of toxins in the colon which can seep into the bloodstream.

- Know what you can and cannot eat safely: www.ewg.org is a resource for learning which foods contain pesticides.


Four solar homes built by students at Missouri University of Science and Technology will soon become home to an experimental microgrid to manage and store renewable energy. The houses, all past entries into the Solar Decathlon design competition, make up the university's Solar Village. In its initial phase, the project involves Missouri S&T students and researchers, along with representatives from utility companies, the Army Corps of Engineers and several Missouri businesses. The goal is to demonstrate the feasibility of small-scale microgrids for future use. "Distributed power generation is one of the key elements of a microgrid. In our case, we're using solar panels," says Dr. Mehdi Ferdowsi, associate professor of electrical and computer engineering at Missouri S&T. "It's called a microgrid because it's less dependent on the utility power grid. The idea is that if there is a blackout, it can operate in what we call 'islanded mode,' and convert to using stored solar energy. "Utility companies are interested to see if this could be a viable business model for the future," he says. "For example, they could rent out renewable energy generators to subdivisions, creating a new paradigm for selling electricity." Ferdowsi says that Missouri S&T's Solar Village is an ideal place to test microgrid technology. "The four houses were built in a 10-year span of time and each was designed individually, but converting them to the technology is not complicated," he says. Students living in the solar houses will monitor the results. "We hope to demonstrate that the technology is expandable to many, based on these four houses," he says. "The students will also demonstrate the human aspect of the project -- how people interact with a new system of energy management." Components necessary for the project include batteries for energy storage, a power electronic converter, software and hardware. Two lithium battery racks were donated by A123 Systems Inc. 
(now Wanxiang Group) in December. Ferdowsi estimates their combined worth at $75,000 to $100,000. "These batteries are very efficient, but they are super heavy with 8-foot-tall racks," he says. "We hope to have them installed in a shed in the Solar Village by the end of summer, along with the converter." The hardware and software would be located in the houses. Photovoltaic (PV) arrays on the solar homes are designed to generate about 25 kilowatts of power. The donated batteries will provide 60 kilowatt hours of energy storage for the microgrid. Researchers are now deciding which converter and intelligence system to purchase. "Security is also a factor -- we want to be sure the system is hacker-proof," says Ferdowsi. Several Missouri S&T alumni serve on the advisory council that was created to guide the integration of microgrid components into the Solar Village, and to ensure the microgrid is designed for future expansions. One, alumnus Brent McKinney, manager of electrical transmission with City Utilities of Springfield (Mo.), helped facilitate a $75,000 grant for the project through the American Public Power Association. The grant will help fund battery array installation and graduate student research in community energy storage. Dr. Fatih Dogan, professor of materials science and engineering at S&T, has been working with St. Louis-based utility company Ameren, which plans to provide and install a residential fuel cell and heat recovery demonstration unit in the village. The fuel cell will serve as an additional microgrid component. Future expansion plans include incorporating a wind turbine, generators, electric vehicles and an electric vehicle charging infrastructure. "There is so much potential in this project, and so many groups that can benefit from it," says Angela Rolufs, director of the office of sustainable energy and environmental engagement at Missouri S&T, which manages the Solar Village. 
"We had this great idea and all the pieces for it -- we just needed some help making it happen." SourceImage Credit

Tire recycling represents an untapped opportunity that may prove a success if processing costs do not become prohibitive. Europe's tire waste production is 3 million tonnes per year. Currently 65% to 70% of used tires end up in landfills. Not only do they cause environmental damage, they also represent a loss of the added value that recycling can generate in the form of new products. One approach to recycling tires is now being investigated in an EU-funded project called TyGRE. Tires offer recycling potential because they have a better heating value than biomass or coal, and they contain a high content of volatile gases. They can therefore be an interesting source of synthetic fuels, also called synfuels, according to Sabrina Portofino, a researcher at the Italian national agency for new technologies, energy and sustainable economic development, ENEA, in Portici, near Naples. As part of the project, she is conducting an experiment to analyse a thermal process to recuperate synthesis gas, also called syngas, and solid materials from the tire scrap. The research project consists of two components. First, it investigates the pyrolysis of the tire material to extract the volatile gases that form the syngas. Second, it is looking into the use of the resulting char to produce other materials, most importantly silicon carbide, a material used in the manufacture of ceramic materials and in electronic applications. The first stage of the experimental process set up at ENEA consists of a heat treatment of the tire scrap. This process involves injecting the scrap, together with steam, into a reactor and heating it to almost 1,000 degrees Celsius. Although the heating requires energy, that energy will be recovered from the syngas produced, a mixture of mainly hydrogen, carbon monoxide, carbon dioxide and methane. This gas can be used as a fuel -- having a similar heating capacity to natural gas -- but also as a starter material for the production of other by-products.
Such by-products are what add the most value to the recycling process. They are viewed as a "must." Solid carbon is collected after the gasification as a basis for the production of these by-products. "To increase the added value of the gasification we decided to include the production of products such as silicon carbide," says Portofino. The carbon would react with silicon oxide at high temperature to form the silicon carbide. Recycling tires to create fuels alone is not promising, but having silicon carbide as an added by-product is a good choice, according to Valerie Shulman, Secretary General of the European Tyre Recycling Association, ETRA. "Silicon carbide is one of the materials of the future, it is used in metallurgy, in ceramics, and in a variety of other products. It is quite expensive to produce but you can get from 1,200 to 3,000 Euro a tonne," she says. Some experts are sceptical regarding the cost effectiveness of this process, however. "I think the cost is too high, and you have to use a granulate that is expensive," comments Juan Antonio Tejela Otero, an engineer and sales manager at Renecal, a tire recycling company in Guardo, in the Palencia province of Spain. A prototype plant is now under construction at the ENEA facilities in Trisaia in Southern Italy. It is expected to be in operation at the end of March. It will process about 30 kg of tire waste per hour. Operating the prototype will establish how sustainable the TyGRE recycling scheme will be. Portofino concludes: "We will then be able to do the energy balance of the whole process." On the web: www.innovationseeds.eu

Genes from the family of bacteria that produce vinegar, Kombucha tea and nata de coco have become stars in a project -- which scientists today said has reached an advanced stage -- that would turn algae into solar-powered factories for producing the "wonder material" nanocellulose. Their report on advances in getting those genes to produce fully functional nanocellulose was part of the 245th National Meeting & Exposition of the American Chemical Society (ACS), the world's largest scientific society, being held here this week. "If we can complete the final steps, we will have accomplished one of the most important potential agricultural transformations ever," said R. Malcolm Brown, Jr., Ph.D. "We will have plants that produce nanocellulose abundantly and inexpensively. It can become the raw material for sustainable production of biofuels and many other products. While producing nanocellulose, the algae will absorb carbon dioxide, the main greenhouse gas linked to global warming." Brown, who has pioneered research in the field for more than 40 years, spoke at the First International Symposium on Nanocellulose, part of the ACS meeting. Abstracts of the presentations appear below. Cellulose is the most abundant organic polymer on Earth, a material, like plastics, consisting of molecules linked together into long chains. Cellulose makes up tree trunks and branches, corn stalks and cotton fibers, and it is the main component of paper and cardboard. People eat cellulose in "dietary fiber," the indigestible material in fruits and vegetables. Cows, horses and termites can digest the cellulose in grass, hay and wood. Most cellulose consists of wood fibers and cell wall remains. Very few living organisms can actually synthesize and secrete cellulose in its native nanostructure form of microfibrils. At this level, nanometer-scale fibrils are very hydrophilic and look like jelly. A nanometer is one-millionth the thickness of a U.S. dime. 
Nevertheless, cellulose shares the unique properties of other nanometer-sized materials -- properties much different from large quantities of the same material. Nanocellulose-based materials can be stronger than steel and stiffer than Kevlar. Great strength, light weight and other advantages have fostered interest in using nanocellulose in everything from lightweight armor and ballistic glass to wound dressings and scaffolds for growing replacement organs for transplantation. In the 1800s, French scientist Louis Pasteur first discovered that vinegar-making bacteria make "a sort of moist skin, swollen, gelatinous and slippery" -- a "skin" now known as bacterial nanocellulose. Nanocellulose made by bacteria has advantages, including ease of production and high purity, that fostered the kind of scientific excitement reflected in the first international symposium on the topic, Brown pointed out. Brown recalled that in 2001, a discovery by David Nobles, Ph.D., a member of the research team at the University of Texas at Austin, refocused their research on nanocellulose, but with a different microbe. Nobles established that several kinds of blue-green algae, which are mainly photosynthetic bacteria much like the vinegar-making bacteria in basic structure, can produce nanocellulose. These blue-green algae are also called cyanobacteria. One of the largest problems with cyanobacterial nanocellulose is that it is not made in abundant amounts in nature. If production can be scaled up, Brown describes the discovery as "one of the most important discoveries in plant biology." In the 1970s, Brown and colleagues began focusing on Acetobacter xylinum (A. xylinum), a bacterium that secretes nanocellulose directly into the culture medium, and using it as an ideal model for future research. Other members of the Acetobacter family find commercial uses in producing vinegar and other products. In the 1980s and 1990s, Brown's team sequenced the first nanocellulose genes from A. xylinum.
They also pinpointed the genes involved in polymerizing nanocellulose (linking its molecules together into long chains) and in crystallizing it (giving nanocellulose the final touches needed for it to remain stable and functional). But Brown also recognized drawbacks in using A. xylinum or other bacteria engineered with those genes to make commercial amounts of nanocellulose. Bacteria, for instance, would need a high-purity broth of food and other nutrients to grow in the huge industrial fermentation tanks that make everything from vinegar and yogurt to insulin and other medicines. Those drawbacks shifted their focus to engineering the A. xylinum nanocellulose genes into Nobles' blue-green algae. Brown explained that algae have multiple advantages for producing nanocellulose. Cyanobacteria, for instance, make their own nutrients from sunlight and water, and remove carbon dioxide from the atmosphere while doing so. Cyanobacteria also have the potential to release nanocellulose into their surroundings, much like A. xylinum, making it easier to harvest. In his report at the ACS meeting, Brown described how his team has already genetically engineered the cyanobacteria to produce one form of nanocellulose, the long-chain, or polymer, form of the material. And they are moving ahead with the next step, engineering the cyanobacteria to synthesize a more complete form of nanocellulose, one that is a polymer with a crystalline architecture. He also said that operations are being scaled up, with research moving from laboratory-sized tests to larger outdoor facilities. Brown expressly pointed out that one of the major barriers to commercializing nanocellulose fuels involves national policy and politics, rather than science. Biofuels, he said, will face a difficult time for decades into the future in competing with the less-expensive natural gas now available with hydraulic fracturing, or "fracking."
In the long run, the United States will need sustainable biofuels, he said, citing the importance of national energy policies that foster parallel development and commercialization of biofuels.

Vitamin C is perhaps best known for its ability to strengthen the immune system. But this potent nutrient also has many other important roles that control significant aspects of our health. When we get enough in our diets, vitamin C helps detoxify our bodies, promotes healing of all of our cells, and allows us to better deal with stress. It also supports the good bacteria in our gut, destroys detrimental bacteria and viruses, neutralizes harmful free radicals, removes heavy metals, protects us from pollution, and much more. Unfortunately, most Americans are not getting anywhere near enough of this vitamin to experience these health benefits. That's especially true for our children. One reason why we fall so short is that our diet simply does not consist of nearly enough raw fruits and vegetables. Another reason is that the RDA of 90 mg for vitamin C is set much too low, which is the same problem we see with vitamin D. Such a low RDA leads people into a false sense of security that they are meeting their daily requirements. It also makes them wary of taking the much higher dosages that are required for good health. So the question becomes just how much vitamin C does a human need? A good starting point is to look at animals that are able to synthesize their own vitamin C. All animals except humans, primates, guinea pigs, and a handful of other species are able to make their own vitamin C. We know that the vast majority of animals make approximately 30 mg per kg of body weight. That works out to be about 2 grams of vitamin C for a 150 pound person. We also know that when animals are under stress, injured, or sick, they can make up to ten times more vitamin C than their normal daily requirements. Since humans are unable to make vitamin C, we must get it from our diets. When the differences in body weights are equalized, primates and guinea pigs consume 20 to 80 times the RDA suggested amount. 
The great apes, our closest living relatives, require anywhere from 2-6 grams (2,000 - 6,000 mg) of Vitamin C per day under normal healthy conditions. How much we humans need can be a bit more complicated, as it depends on many variables such as diet, age, stress level, amount of exposure to pollutants, amount of medications we take, and overall health. A generic amount is around 1-4 grams per day for a healthy individual. People with serious illnesses will need much, much more. Excellent food sources of this potent nutrient include rose hips, acerola cherries, and camu camu fruit. More common produce such as chili peppers, red peppers, parsley, kiwifruit, and broccoli are also good sources. It is important to note that most of the vitamin C in foods will be destroyed with cutting, cooking, storing, and other forms of processing. As far as supplements are concerned, natural vitamin C complexes are much more potent than the common and less expensive ascorbate forms. However, someone who needs a lot of vitamin C will find that the natural complexes can be cost prohibitive. Mineral ascorbates and ascorbic acid are acceptable forms to take for reaping all of vitamin C's many health benefits. Just be sure to look for vitamin C supplements that are non-GMO, as the vast majority of these supplements come from GMO corn.

Source: http://www.naturalnews.com/032027_vitamin_C_immune_system.html#ixzz2Qsc5syhm

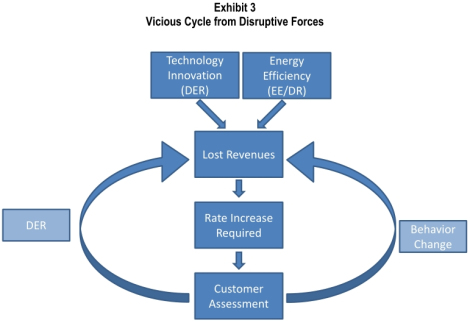

Solar power and other distributed renewable energy technologies could lay waste to U.S. power utilities and burn the utility business model, which has remained virtually unchanged for a century, to the ground. That is not wild-eyed hippie talk. It is the assessment of the utilities themselves. Back in January, the Edison Electric Institute — the (typically stodgy and backward-looking) trade group of U.S. investor-owned utilities — released a report [PDF] that, as far as I can tell, went almost entirely without notice in the press. That’s a shame. It is one of the most prescient and brutally frank things I’ve ever read about the power sector. It is a rare thing to hear an industry tell the tale of its own incipient obsolescence. I’ve been thinking about how to convey to you, normal people with healthy social lives and no time to ponder the byzantine nature of the power industry, just what a big deal the coming changes are. They are nothing short of revolutionary … but rather difficult to explain without jargon. So, just a bit of background. You probably know that electricity is provided by utilities. Some utilities both generate electricity at power plants and provide it to customers over power lines. They are “regulated monopolies,” which means they have sole responsibility for providing power in their service areas. Some utilities have gone through deregulation; in that case, power generation is split off into its own business, while the utility’s job is to purchase power on competitive markets and provide it to customers over the grid it manages. This complexity makes it difficult to generalize about utilities … or to discuss them without putting people to sleep. But the main thing to know is that the utility business model relies on selling power. That’s how they make their money. 
Here’s how it works: A utility makes a case to a public utility commission (PUC), saying “we will need to satisfy this level of demand from consumers, which means we’ll need to generate (or purchase) this much power, which means we’ll need to charge these rates.” If the PUC finds the case persuasive, it approves the rates and guarantees the utility a reasonable return on its investments in power and grid upkeep. Thrilling, I know. The thing to remember is that it is in a utility’s financial interest to generate (or buy) and deliver as much power as possible. The higher the demand, the higher the investments, the higher the utility shareholder profits. In short, all things being equal, utilities want to sell more power. (All things are occasionally not equal, but we’ll leave those complications aside for now.) Now, into this cozy business model enters cheap distributed solar PV, which eats away at it like acid. First, the power generated by solar panels on residential or commercial roofs is not utility-owned or utility-purchased. From the utility’s point of view, every kilowatt-hour of rooftop solar looks like a kilowatt-hour of reduced demand for the utility’s product. Not something any business enjoys. (This is the same reason utilities are instinctively hostile to energy efficiency and demand response programs, and why they must be compelled by regulations or subsidies to create them. Utilities don’t like reduced demand!) It’s worse than that, though. Solar power peaks at midday, which means it is strongest close to the point of highest electricity use — “peak load.” Problem is, providing power to meet peak load is where utilities make a huge chunk of their money. Peak power is the most expensive power. So when solar panels provide peak power, they aren’t just reducing demand, they’re reducing demand for the utilities’ most valuable product. But wait. Renewables are limited by the fact they are intermittent, right? “The sun doesn’t always shine,” etc. 
Customers will still have to rely on grid power for the most part. Right? This is a widely held article of faith, but EEI (of all places!) puts it to rest. (In this and all quotes that follow, “DER” means distributed energy resources, which for the most part means solar PV.) Due to the variable nature of renewable DER, there is a perception that customers will always need to remain on the grid. While we would expect customers to remain on the grid until a fully viable and economic distributed non-variable resource is available, one can imagine a day when battery storage technology or micro turbines could allow customers to be electric grid independent. To put this into perspective, who would have believed 10 years ago that traditional wire line telephone customers could economically “cut the cord?” [Emphasis mine.] Indeed! Just the other day, Duke Energy CEO Jim Rogers said, “If the cost of solar panels keeps coming down, installation costs come down and if they combine solar with battery technology and a power management system, then we have someone just using [the grid] for backup.” What happens if a whole bunch of customers start generating their own power and using the grid merely as backup? The EEI report warns of “irreparable damages to revenues and growth prospects” of utilities. Utility investors are accustomed to large, long-term, reliable investments with a 30-year cost recovery — fossil fuel plants, basically. The cost of those investments, along with investments in grid maintenance and reliability, are spread by utilities across all ratepayers in a service area. What happens if a bunch of those ratepayers start reducing their demand or opting out of the grid entirely? Well, the same investments must now be spread over a smaller group of ratepayers. In other words: higher rates for those who haven’t switched to solar. That’s how it starts. 
These two paragraphs from the EEI report are a remarkable description of the path to obsolescence faced by the industry: The financial implications of these threats are fairly evident. Start with the increased cost of supporting a network capable of managing and integrating distributed generation sources. Next, under most rate structures, add the decline in revenues attributed to revenues lost from sales foregone. These forces lead to increased revenues required from remaining customers … and sought through rate increases. The result of higher electricity prices and competitive threats will encourage a higher rate of DER additions, or will promote greater use of efficiency or demand-side solutions. Increased uncertainty and risk will not be welcomed by investors, who will seek a higher return on investment and force defensive-minded investors to reduce exposure to the sector. These competitive and financial risks would likely erode credit quality. The decline in credit quality will lead to a higher cost of capital, putting further pressure on customer rates. Ultimately, capital availability will be reduced, and this will affect future investment plans. The cycle of decline has been previously witnessed in technology-disrupted sectors (such as telecommunications) and other deregulated industries (airlines). Did you follow that? As ratepayers opt for solar panels (and other distributed energy resources like micro-turbines, batteries, smart appliances, etc.), it raises costs on other ratepayers and hurts the utility’s credit rating. As rates rise on other ratepayers, the attractiveness of solar increases, so more opt for it. Thus costs on remaining ratepayers are even further increased, the utility’s credit even further damaged. It’s a vicious, self-reinforcing cycle:
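The feedback loop described above can be sketched in a few lines of Python. This is only an illustration under invented assumptions: the fixed cost, the customer count, the baseline exit rate and the sensitivity of exits to rate increases are all hypothetical numbers, not figures from the EEI report. The one mechanism taken from the text is that the same investments get spread over whoever is left.

```python
def simulate_rate_spiral(fixed_costs, customers, base_exit, sensitivity, years):
    """Return a list of (year, customers, rate) tuples for a toy utility.

    fixed_costs -- yearly cost the utility must recover (hypothetical)
    customers   -- starting number of ratepayers (hypothetical)
    base_exit   -- fraction leaving each year even at the original rate (assumed)
    sensitivity -- extra exit fraction per unit of relative rate increase (assumed)
    """
    base_rate = fixed_costs / customers
    history = []
    for year in range(years):
        # The same fixed costs are spread over whoever is still on the grid.
        rate = fixed_costs / customers
        history.append((year, customers, rate))
        # Assumed behavior: the further rates climb above the starting
        # rate, the larger the share of customers who switch to solar.
        rate_increase = rate / base_rate - 1.0
        exit_fraction = min(0.9, base_exit + sensitivity * rate_increase)
        customers *= 1.0 - exit_fraction
    return history


if __name__ == "__main__":
    for year, customers, rate in simulate_rate_spiral(
        1_000_000.0, 10_000.0, 0.01, 0.5, 10
    ):
        print(f"year {year}: {customers:8.0f} customers paying {rate:7.2f} each")
```

Every year the rate is higher and the customer base smaller than the year before, and the exits speed up as rates climb. How fast the spiral actually turns depends entirely on the assumed sensitivity, which this sketch makes up.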

One implication of all this — a poorly understood implication — is that rooftop solar fucks up the utility model even at relatively low penetrations, because it goes straight at utilities’ main profit centers. (It’s already happening in Germany.) Right now, distributed solar PV is a relatively tiny slice of U.S. electricity, less than 1 percent. For that reason, utility investors aren’t paying much attention. “Despite the risks that a rapidly growing level of DER penetration and other disruptive challenges may impose,” EEI writes, “they are not currently being discussed by the investment community and factored into the valuation calculus reflected in the capital markets.” But that 1 percent is concentrated in a small handful of utility districts, so trouble, at least for that first set of utilities, is just over the horizon. Utility investors are sleepwalking into a maelstrom. (“Despite all the talk about investors assessing the future in their investment evaluations,” the report notes dryly, “it is often not until revenue declines are reported that investors realize that the viability of the business is in question.” In other words, investors aren’t that smart and rational financial markets are a myth.) Bloomberg Energy Finance forecasts 22 percent compound annual growth in all solar PV, which means that by 2020 distributed solar (which will account for about 15 percent of total PV) could reach up to 10 percent of load in certain areas. If that happens, well: Assuming a decline in load, and possibly customers served, of 10 percent due to DER with full subsidization of DER participants, the average impact on base electricity prices for non-DER participants will be a 20 percent or more increase in rates, and the ongoing rate of growth in electricity prices will double for non-DER participants (before accounting for the impact of the increased cost of serving distributed resources). So rates would rise by 20 percent for those without solar panels. 
Can you imagine the political shitstorm that would create? (There are reasons to think EEI is exaggerating this effect, but we’ll get into that in the next post.) If nothing is done to check these trends, the U.S. electric utility as we know it could be utterly upended. The report compares utilities’ possible future to the experience of the airlines during deregulation or to the big monopoly phone companies when faced with upstart cellular technologies. In case the point wasn’t made, the report also analogizes utilities to the U.S. Postal Service, Kodak, and RIM, the maker of Blackberry devices. These are not meant to be flattering comparisons. Remember, too, that these utilities are not Google or Facebook. They are not accustomed to a state of constant market turmoil and reinvention. This is a venerable old boys network, working very comfortably within a business model that has been around, virtually unchanged, for a century. A friggin’ century, more or less without innovation, and now they’re supposed to scramble and be all hip and new-age? Unlikely. So what’s to be done? You won’t be surprised to hear that EEI’s prescription is mainly focused on preserving utilities and their familiar business model. But is that the best thing for electricity consumers? Is that the best thing for the climate?


Natural gas power plants can use about 20 percent less fuel when the sun is shining by injecting solar energy into natural gas with a new system being developed by the Department of Energy's Pacific Northwest National Laboratory. The system converts natural gas and sunlight into a more energy-rich fuel called syngas, which power plants can burn to make electricity. "Our system will enable power plants to use less natural gas to produce the same amount of electricity they already make," said PNNL engineer Bob Wegeng, who is leading the project. "At the same time, the system lowers a power plant's greenhouse gas emissions at a cost that's competitive with traditional fossil fuel power." PNNL will conduct field tests of the system at its sunny campus in Richland, Wash., this summer. With the U.S. increasingly relying on inexpensive natural gas for energy, this system can reduce the carbon footprint of power generation. DOE's Energy Information Administration estimates natural gas will make up 27 percent of the nation's electricity by 2020. Wegeng noted PNNL's system is best suited for power plants located in sunshine-drenched areas such as the American Southwest. Installing PNNL's system in front of natural gas power plants turns them into hybrid solar-gas power plants. The system uses solar heat to convert natural gas into syngas, a fuel containing hydrogen and carbon monoxide. Because syngas has a higher energy content, a power plant equipped with the system can consume about 20 percent less natural gas while producing the same amount of electricity. This decreased fuel usage is made possible with concentrating solar power, which uses a reflecting surface to concentrate the sun's rays like a magnifying glass. PNNL's system uses a mirrored parabolic dish to direct sunbeams to a central point, where a PNNL-developed device absorbs the solar heat to make syngas. 
Macro savings, micro technology

About four feet long and two feet wide, the device contains a chemical reactor and several heat exchangers. The reactor has narrow channels that are as wide as six dimes stacked on top of each other. Concentrated sunlight heats up the natural gas flowing through the reactor's channels, which hold a catalyst that helps turn natural gas into syngas. The heat exchanger features narrower channels that are a couple of times thicker than a strand of human hair. The exchanger's channels help recycle heat left over from the chemical reaction. By reusing the heat, solar energy is used more efficiently to convert natural gas into syngas. Tests on an earlier prototype of the device showed more than 60 percent of the solar energy that hit the system's mirrored dish was converted into chemical energy contained in the syngas.

Lower-carbon cousin to traditional power plants

PNNL is refining the earlier prototype to increase its efficiency while creating a design that can be made at a reasonable price. The project includes developing cost-effective manufacturing techniques that could be used for mass production. The manufacturing methods will be developed by PNNL staff at the Microproducts Breakthrough Institute, a research and development facility in Corvallis, Ore., that is jointly managed by PNNL and Oregon State University. Wegeng's team aims to keep the system's overall cost low enough so that the electricity produced by a natural gas power plant equipped with the system would cost no more than 6 cents per kilowatt-hour by 2020. Such a price tag would make hybrid solar-gas power plants competitive with conventional, fossil fuel-burning power plants while also reducing greenhouse gas emissions. The system is adaptable to a large range of natural gas power plant sizes. The number of PNNL devices needed depends on a particular power plant's size. For example, a 500 MW plant would need roughly 3,000 dishes equipped with PNNL's device.
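To get a feel for the scale, the 500 MW example can be turned into a rough sizing sketch. The linear scaling here is an assumption for illustration only; the article says the number of devices depends on plant size but does not say how. The function names are hypothetical, and the 20 percent figure is the article's estimate for when the sun is shining.

```python
import math

# Figures quoted in the article.
REFERENCE_PLANT_MW = 500      # example plant size
REFERENCE_DISHES = 3000       # "roughly 3,000 dishes" for that plant
FUEL_SAVINGS = 0.20           # "about 20 percent less" natural gas in sunshine


def dishes_needed(plant_mw):
    """Estimate the dish count, ASSUMING it scales linearly with plant size."""
    return math.ceil(plant_mw * REFERENCE_DISHES / REFERENCE_PLANT_MW)


def fuel_with_system(baseline_fuel):
    """Natural gas burned while the sun shines, given the fuel burned without the system."""
    return baseline_fuel * (1.0 - FUEL_SAVINGS)


if __name__ == "__main__":
    for size_mw in (100, 250, 500):
        print(f"{size_mw} MW plant: about {dishes_needed(size_mw)} dishes")
```

Under that assumption the article's example works out to about six dishes per megawatt of plant capacity.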
Unlike many other solar technologies, PNNL's system doesn't require power plants to cease operations when the sun sets or clouds cover the sky. Power plants can bypass the system and burn natural gas directly. Though outside the scope of the current project, Wegeng also envisions a day when PNNL's solar-driven system could be used to create transportation fuels. Syngas can also be used to make synthetic crude oil, which can be refined into the diesel and gasoline that run our cars.

Though they be but little, they are fierce. The most powerful batteries on the planet are only a few millimeters in size, yet they pack such a punch that a driver could use a cellphone powered by these batteries to jump-start a dead car battery -- and then recharge the phone in the blink of an eye. Developed by researchers at the University of Illinois at Urbana-Champaign, the new microbatteries out-power even the best supercapacitors and could drive new applications in radio communications and compact electronics. Led by William P. King, the Bliss Professor of mechanical science and engineering, the researchers published their results in the April 16 issue of Nature Communications. "This is a whole new way to think about batteries," King said. "A battery can deliver far more power than anybody ever thought. In recent decades, electronics have gotten small. The thinking parts of computers have gotten small. And the battery has lagged far behind. This is a microtechnology that could change all of that. Now the power source is as high-performance as the rest of it." With currently available power sources, users have had to choose between power and energy. For applications that need a lot of power, like broadcasting a radio signal over a long distance, capacitors can release energy very quickly but can only store a small amount. For applications that need a lot of energy, like playing a radio for a long time, fuel cells and batteries can hold a lot of energy but release it or recharge slowly. "There's a sacrifice," said James Pikul, a graduate student and first author of the paper. "If you want high energy you can't get high power; if you want high power it's very difficult to get high energy. But for very interesting applications, especially modern applications, you really need both. That's what our batteries are starting to do. We're really pushing into an area in the energy storage design space that is not currently available with technologies today." 
The new microbatteries offer both power and energy, and by tweaking the structure a bit, the researchers can tune them over a wide range on the power-versus-energy scale. The batteries owe their high performance to their internal three-dimensional microstructure. Batteries have two key components: the anode (minus side) and cathode (plus side). Building on a novel fast-charging cathode design by materials science and engineering professor Paul Braun's group, King and Pikul developed a matching anode and then developed a new way to integrate the two components at the microscale to make a complete battery with superior performance. With so much power, the batteries could enable sensors or radio signals that broadcast 30 times farther, or devices 30 times smaller. The batteries are rechargeable and can charge 1,000 times faster than competing technologies -- imagine juicing up a credit-card-thin phone in less than a second. In addition to consumer electronics, medical devices, lasers, sensors and other applications could see leaps forward in technology with such power sources available. "Any kind of electronic device is limited by the size of the battery -- until now," King said. "Consider personal medical devices and implants, where the battery is an enormous brick, and it's connected to itty-bitty electronics and tiny wires. Now the battery is also tiny." Now, the researchers are working on integrating their batteries with other electronics components, as well as manufacturability at low cost. "Now we can think outside of the box," Pikul said. "It's a new enabling technology. It's not a progressive improvement over previous technologies; it breaks the normal paradigms of energy sources. It's allowing us to do different, new things." The National Science Foundation and the Air Force Office of Scientific Research supported this work. 
King also is affiliated with the Beckman Institute for Advanced Science and Technology; the Frederick Seitz Materials Research Laboratory; the Micro and Nanotechnology Laboratory; and the department of electrical and computer engineering at the U. of I.


This isn’t a leak. It isn’t a timid flow. It’s a flood. I’m talking about the criticism of Monsanto’s so-called science of genetically engineered food. For the past 20 years, independent researchers have been attacking Monsanto science in various ways, and finally the NY Times has joined the crowd. But it’s the way Mark Bittman, lead food columnist for the Times magazine, does it that really crashes the whole GMO delusion. Writing in his April 2 column, “Why Do G.M.O.’s Need Protection?”, Bittman leads with this: “Genetic engineering in agriculture has disappointed many people who once had hopes for it.” As in: the party’s over, turn out the lights. Bittman explains: “…genetic engineering, or, more properly, transgenic engineering – in which a gene, usually from another species of plant, bacterium or animal, is inserted into a plant in the hope of positively changing its nature – has been disappointing.” As if this weren’t enough, Bittman spells it out more specifically: “In the nearly 20 years of applied use of G.E. in agriculture there have been two notable ‘successes,’ along with a few less notable ones. These are crops resistant to Monsanto’s Roundup herbicide (Monsanto develops both the seeds and the herbicide to which they’re resistant) and crops that contain their own insecticide. The first have already failed, as so-called superweeds have developed resistance to Roundup, and the second are showing signs of failing, as insects are able to develop resistance to the inserted Bt toxin — originally a bacterial toxin — faster than new crop variations can be generated.” Bittman goes on to write that superweed resistance was a foregone conclusion; scientists understood, from the earliest days of GMOs, that spraying generations of these weeds with Roundup would give us exactly what we have today: failure of the technology to prevent what it was designed to prevent. The weeds wouldn’t die out. They would retool and thrive.
“The result is that the biggest crisis in monocrop agriculture – something like 90 percent of all soybeans and 70 percent of corn is grown using Roundup Ready seed – lies in glyphosate’s inability to any longer provide total or even predictable control, because around a dozen weed species have developed resistance to it.” Glyphosate is the active ingredient in Roundup. Just as the weeds developed resistance and immunity to the herbicide, insects that were supposed to be killed by the toxin engineered into Monsanto’s BT crops are also surviving. Five years ago, it would have been unthinkable that the NY Times would print such a complete rejection of GMO plant technology. Now, it’s “well, everybody knows.” The Times sees no point in holding back any longer. Of course, if it were a newspaper with any real courage, it would launch a whole series of front-page pieces on this enormous failure, and the gigantic fraud that lies behind it. Then the Times might actually see its readership improve. Momentum is something its editors understand well enough. You set your hounds loose on a story, you send them out with a mandate to expose failure, fraud, and crime down to their roots, and you know that, in the ensuing months, formerly reticent researchers and corporate employees and government officials will appear out of the woodwork confessing their insider knowledge. The story will deepen. It will take on new branches. The revelations will indict the corporation (Monsanto), its government partners, and the scientists who falsified and hid data. In this case, the FDA and the USDA will come in for major hits. They will backtrack and lie and mis-explain, for a while, and then, like buds in the spring, agency employees will emerge and admit the truth. These agencies were co-conspirators. And once the story unravels far enough, the human health hazards and destruction wreaked by GMOs will take center stage. 
All the bland pronouncements about “nobody has gotten sick from GMOs” will evaporate in the wind. It won’t simply be, “Well, we never tested health dangers adequately,” it’ll be, “We knew there was trouble from the get-go.” Yes, the Times could make all this happen. But it won’t. There are two basic reasons. First, it considers Big Ag too big to fail. There is now so much acreage in America tied up in GMO crops that to reject the whole show would cause titanic eruptions on many levels. And second, the Times is part of the very establishment that views the GMO industry as a way of bringing Globalism to fruition for the whole planet. Centralizing the food supply in a few hands means the population of the world, in the near future, will eat or not eat according to the dictates of a few unelected men. Redistribution of basic resources to the people of Earth, from such a control point, is what Globalism is all about: “Naturally, we love you all, but decisions must be made. You people over here will live well, you people over there will live not so well, and you people back there will live not at all. “This is our best judgment. Don’t worry, be happy.”
